29. Robustness#

In addition to what’s in Anaconda, this lecture will need the following libraries:

!pip install --upgrade quantecon

29.1. Overview#

This lecture modifies a Bellman equation to express a decision-maker’s doubts about transition dynamics.

His specification doubts make the decision-maker want a robust decision rule.

Robust means insensitive to misspecification of transition dynamics.

The decision-maker has a single approximating model of the transition dynamics.

He calls it approximating to acknowledge that he doesn’t completely trust it.

He fears that transition dynamics are actually determined by another model that he cannot describe explicitly.

All that he knows is that the actual data-generating model is in some (uncountable) set of models that surrounds his approximating model.

He quantifies the discrepancy between his approximating model and the genuine data-generating model by using a quantity called entropy.

(We’ll explain what entropy means below)

He wants a decision rule that will work well enough no matter which of those other models actually governs outcomes.

This is what it means for his decision rule to be “robust to misspecification of an approximating model”.

This may sound like too much to ask for, but \(\ldots\).

\(\ldots\) a secret weapon is available to design robust decision rules.

The secret weapon is max-min control theory.

A value-maximizing decision-maker enlists the aid of an (imaginary) value-minimizing model chooser to construct bounds on the value attained by a given decision rule under different models of the transition dynamics.

The original decision-maker uses those bounds to construct a decision rule with an assured performance level, no matter which model actually governs outcomes.

Note

In reading this lecture, please don’t think that our decision-maker is paranoid when he conducts a worst-case analysis. By designing a rule that works well against a worst-case, his intention is to construct a rule that will work well across a set of models.

Let’s start with some imports:

import pandas as pd

import numpy as np

from scipy.linalg import eig

import matplotlib.pyplot as plt

import quantecon as qe

29.1.1. Sets of models imply sets of values#

Our “robust” decision-maker wants to know how well a given rule will work when he does not know a single transition law \(\ldots\).

\(\ldots\) he wants to know sets of values that will be attained by a given decision rule \(F\) under a set of transition laws.

Ultimately, he wants to design a decision rule \(F\) that shapes the set of values in ways that he prefers.

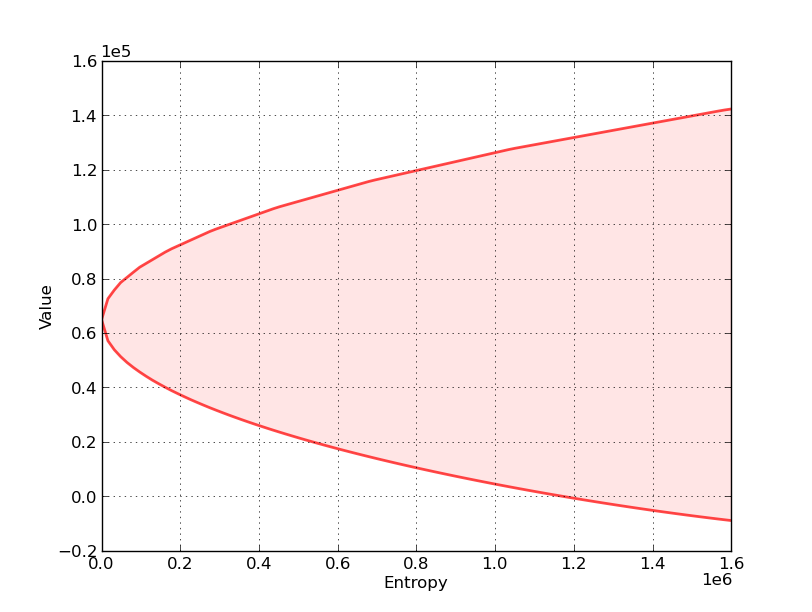

With this in mind, consider the following graph, which relates to a particular decision problem to be explained below

The figure shows a value-entropy correspondence for a particular decision rule \(F\).

The shaded set is the graph of the correspondence, which maps entropy to a set of values associated with a set of models that surround the decision-maker’s approximating model.

Here

Value refers to a sum of discounted rewards obtained by applying the decision rule \(F\) when the state starts at some fixed initial state \(x_0\).

Entropy is a non-negative number that measures the size of a set of models surrounding the decision-maker’s approximating model.

Entropy is zero when the set includes only the approximating model, indicating that the decision-maker completely trusts the approximating model.

Entropy is bigger, and the set of surrounding models is bigger, the less the decision-maker trusts the approximating model of the transition dynamics.

The shaded region indicates that for all models having entropy less than or equal to the number on the horizontal axis, the value obtained will be somewhere within the indicated set of values.

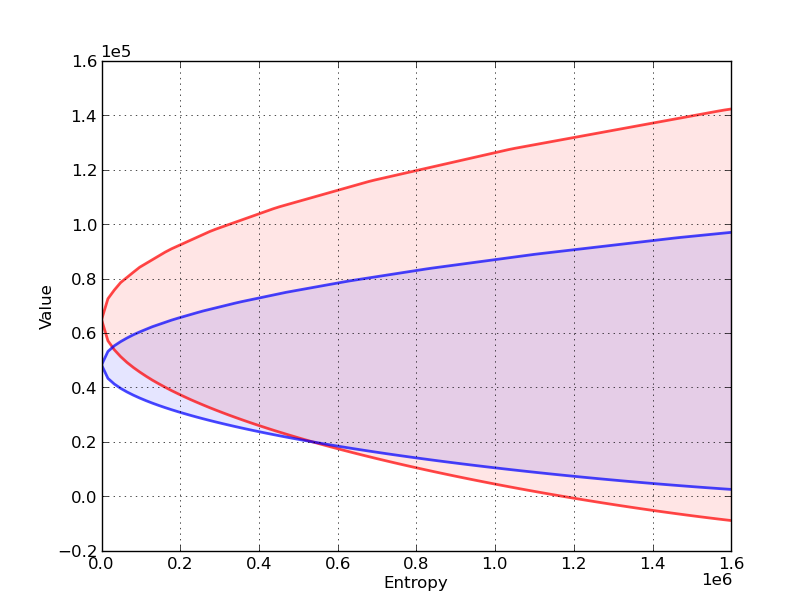

Now let’s compare sets of values associated with two different decision rules, \(F_r\) and \(F_b\).

In the next figure,

The red set shows the value-entropy correspondence for decision rule \(F_r\).

The blue set shows the value-entropy correspondence for decision rule \(F_b\).

The blue correspondence is skinnier than the red correspondence.

This conveys the sense in which the decision rule \(F_b\) is more robust than the decision rule \(F_r\)

more robust means that the set of values is less sensitive to increasing misspecification as measured by entropy

Notice that the less robust rule \(F_r\) promises higher values for small misspecifications (small entropy).

(But it is more fragile in the sense that it is more sensitive to perturbations of the approximating model)

Below we’ll explain in detail how to construct these sets of values for a given \(F\), but for now \(\ldots\).

Here is a hint about the secret weapons we’ll use to construct these sets

We’ll use some min problems to construct the lower bounds

We’ll use some max problems to construct the upper bounds

We will also describe how to choose \(F\) to shape the sets of values.

This will involve crafting a skinnier set at the cost of a lower level (at least for low values of entropy).

29.1.2. Inspiring video#

If you want to understand more about why one serious quantitative researcher is interested in this approach, we recommend Lars Peter Hansen’s Nobel lecture.

29.1.3. Other references#

Our discussion in this lecture is based on

29.2. The model#

For simplicity, we present ideas in the context of a class of problems with linear transition laws and quadratic objective functions.

To fit in with our earlier lecture on LQ control, we will treat loss minimization rather than value maximization.

To begin, recall the infinite horizon LQ problem, where an agent chooses a sequence of controls \(\{u_t\}\) to minimize

subject to the linear law of motion

As before,

\(x_t\) is \(n \times 1\), \(A\) is \(n \times n\)

\(u_t\) is \(k \times 1\), \(B\) is \(n \times k\)

\(w_t\) is \(j \times 1\), \(C\) is \(n \times j\)

\(R\) is \(n \times n\) and \(Q\) is \(k \times k\)

Here \(x_t\) is the state, \(u_t\) is the control, and \(w_t\) is a shock vector.

For now, we take \(\{ w_t \} := \{ w_t \}_{t=1}^{\infty}\) to be deterministic — a single fixed sequence.

We also allow for model uncertainty on the part of the agent solving this optimization problem.

In particular, the agent takes \(w_t = 0\) for all \(t \geq 0\) as a benchmark model but admits the possibility that this model might be wrong.

As a consequence, she also considers a set of alternative models expressed in terms of sequences \(\{ w_t \}\) that are more or less “close” to the zero sequence.

She seeks a policy that will do well enough for a set of alternative models whose members are pinned down by sequences \(\{ w_t \}\).

A sequence \(\{ w_t \}\) might represent

nonlinearities absent from the approximating model

time variations in parameters of the approximating model

omitted state variables in the approximating model

neglected history dependencies \(\ldots\)

and other potential sources of misspecification

Soon we’ll quantify the quality of a model specification in terms of the maximal size of the discounted sum \(\sum_{t=0}^{\infty} \beta^{t+1}w_{t+1}' w_{t+1}\).

29.3. Constructing more robust policies#

If our agent takes \(\{ w_t \}\) as a given deterministic sequence, then, drawing on ideas in earlier lectures on dynamic programming, we can anticipate Bellman equations such as

(Here \(J\) depends on \(t\) because the sequence \(\{w_t\}\) is not recursive)

Our tool for studying robustness is to construct a rule that works well even if an adverse sequence \(\{ w_t \}\) occurs.

In our framework, “adverse” means “loss increasing”.

As we’ll see, this will eventually lead us to construct a Bellman equation

Notice that we’ve added the penalty term \(- \theta w'w\).

Since \(w'w = \| w \|^2\), this term becomes influential when \(w\) moves away from the origin.

The penalty parameter \(\theta\) controls how much we penalize the maximizing agent for “harming” the minimizing agent.

By raising \(\theta\) more and more, we more and more limit the ability of maximizing agent to distort outcomes relative to the approximating model.

So bigger \(\theta\) is implicitly associated with smaller distortion sequences \(\{w_t \}\).

29.3.1. Analyzing the Bellman equation#

So what does \(J\) in (29.3) look like?

As with the ordinary LQ control model, \(J\) takes the form \(J(x) = x' P x\) for some symmetric positive definite matrix \(P\).

One of our main tasks will be to analyze and compute the matrix \(P\).

Related tasks will be to study associated feedback rules for \(u_t\) and \(w_{t+1}\).

First, using matrix calculus, you will be able to verify that

where

and \(I\) is a \(j \times j\) identity matrix. Substituting this expression for the maximum into (29.3) yields

Using similar mathematics, the solution to this minimization problem is \(u = - F x\) where \(F := (Q + \beta B' \mathcal D (P) B)^{-1} \beta B' \mathcal D(P) A\).

Substituting this minimizer back into (29.6) and working through the algebra gives \(x' P x = x' \mathcal B ( \mathcal D( P )) x\) for all \(x\), or, equivalently,

where \(\mathcal D\) is the operator defined in (29.5) and

The operator \(\mathcal B\) is the standard (i.e., non-robust) LQ Bellman operator, and \(P = \mathcal B(P)\) is the standard matrix Riccati equation coming from the Bellman equation — see this discussion.

Under some regularity conditions (see [Hansen and Sargent, 2008]), the operator \(\mathcal B \circ \mathcal D\) has a unique positive definite fixed point, which we denote below by \(\hat P\).

A robust policy, indexed by \(\theta\), is \(u = - \hat F x\) where

We also define

The interpretation of \(\hat K\) is that \(w_{t+1} = \hat K x_t\) on the worst-case path of \(\{x_t \}\), in the sense that this vector is the maximizer of (29.4) evaluated at the fixed rule \(u = - \hat F x\).

Note that \(\hat P, \hat F, \hat K\) are all determined by the primitives and \(\theta\).

Note also that if \(\theta\) is very large, then \(\mathcal D\) is approximately equal to the identity mapping.

Hence, when \(\theta\) is large, \(\hat P\) and \(\hat F\) are approximately equal to their standard LQ values.

Furthermore, when \(\theta\) is large, \(\hat K\) is approximately equal to zero.

Conversely, smaller \(\theta\) is associated with greater fear of model misspecification and greater concern for robustness.

29.4. Robustness as outcome of a two-person zero-sum game#

What we have done above can be interpreted in terms of a two-person zero-sum game in which \(\hat F, \hat K\) are Nash equilibrium objects.

Agent 1 is our original agent, who seeks to minimize loss in the LQ program while admitting the possibility of misspecification.

Agent 2 is an imaginary malevolent player.

Agent 2’s malevolence helps the original agent to compute bounds on his value function across a set of models.

We begin with agent 2’s problem.

29.4.1. Agent 2’s problem#

Agent 2

knows a fixed policy \(F\) specifying the behavior of agent 1, in the sense that \(u_t = - F x_t\) for all \(t\)

responds by choosing a shock sequence \(\{ w_t \}\) from a set of paths sufficiently close to the benchmark sequence \(\{0, 0, 0, \ldots\}\)

A natural way to say “sufficiently close to the zero sequence” is to restrict the summed inner product \(\sum_{t=1}^{\infty} w_t' w_t\) to be small.

However, to obtain a time-invariant recursive formulation, it turns out to be convenient to restrict a discounted inner product

Now let \(F\) be a fixed policy, and let \(J_F(x_0, \mathbf w)\) be the present-value cost of that policy given sequence \(\mathbf w := \{w_t\}\) and initial condition \(x_0 \in \mathbb R^n\).

Substituting \(-F x_t\) for \(u_t\) in (29.1), this value can be written as

where

and the initial condition \(x_0\) is as specified in the left side of (29.10).

Agent 2 chooses \({\mathbf w}\) to maximize agent 1’s loss \(J_F(x_0, \mathbf w)\) subject to (29.9).

Using a Lagrangian formulation, we can express this problem as

where \(\{x_t\}\) satisfied (29.11) and \(\theta\) is a Lagrange multiplier on constraint (29.9).

For the moment, let’s take \(\theta\) as fixed, allowing us to drop the constant \(\beta \theta \eta\) term in the objective function, and hence write the problem as

or, equivalently,

subject to (29.11).

What’s striking about this optimization problem is that it is once again an LQ discounted dynamic programming problem, with \(\mathbf w = \{ w_t \}\) as the sequence of controls.

The expression for the optimal policy can be found by applying the usual LQ formula (see here).

We denote it by \(K(F, \theta)\), with the interpretation \(w_{t+1} = K(F, \theta) x_t\).

The remaining step for agent 2’s problem is to set \(\theta\) to enforce the constraint (29.9), which can be done by choosing \(\theta = \theta_{\eta}\) such that

Here \(x_t\) is given by (29.11) — which in this case becomes \(x_{t+1} = (A - B F + CK(F, \theta)) x_t\).

29.4.2. Using Agent 2’s problem to construct bounds on value sets#

29.4.2.1. The lower bound#

Define the minimized object on the right side of problem (29.12) as \(R_\theta(x_0, F)\).

Because “minimizers minimize” we have

where \(x_{t+1} = (A - B F + CK(F, \theta)) x_t\) and \(x_0\) is a given initial condition.

This inequality in turn implies the inequality

where

The left side of inequality (29.14) is a straight line with slope \(-\theta\).

Technically, it is a “separating hyperplane”.

At a particular value of entropy, the line is tangent to the lower bound of values as a function of entropy.

In particular, the lower bound on the left side of (29.14) is attained when

To construct the lower bound on the set of values associated with all perturbations \({\mathbf w}\) satisfying the entropy constraint (29.9) at a given entropy level, we proceed as follows:

For a given \(\theta\), solve the minimization problem (29.12).

Compute the minimizer \(R_\theta(x_0, F)\) and the associated entropy using (29.15).

Compute the lower bound on the value function \(R_\theta(x_0, F) - \theta \ {\rm ent}\) and plot it against \({\rm ent}\).

Repeat the preceding three steps for a range of values of \(\theta\) to trace out the lower bound.

Note

This procedure sweeps out a set of separating hyperplanes indexed by different values for the Lagrange multiplier \(\theta\).

29.4.2.2. The upper bound#

To construct an upper bound we use a very similar procedure.

We simply replace the minimization problem (29.12) with the maximization problem

where now \(\tilde \theta >0\) penalizes the choice of \({\mathbf w}\) with larger entropy.

(Notice that \(\tilde \theta = - \theta\) in problem (29.12))

Because “maximizers maximize” we have

which in turn implies the inequality

where

The left side of inequality (29.17) is a straight line with slope \(\tilde \theta\).

The upper bound on the left side of (29.17) is attained when

To construct the upper bound on the set of values associated all perturbations \({\mathbf w}\) with a given entropy we proceed much as we did for the lower bound

For a given \(\tilde \theta\), solve the maximization problem (29.16).

Compute the maximizer \(V_{\tilde \theta}(x_0, F)\) and the associated entropy using (29.18).

Compute the upper bound on the value function \(V_{\tilde \theta}(x_0, F) + \tilde \theta \ {\rm ent}\) and plot it against \({\rm ent}\).

Repeat the preceding three steps for a range of values of \(\tilde \theta\) to trace out the upper bound.

29.4.2.3. Reshaping the set of values#

Now in the interest of reshaping these sets of values by choosing \(F\), we turn to agent 1’s problem.

29.4.3. Agent 1’s problem#

Now we turn to agent 1, who solves

where \(\{ w_{t+1} \}\) satisfies \(w_{t+1} = K x_t\).

In other words, agent 1 minimizes

subject to

Once again, the expression for the optimal policy can be found here — we denote it by \(\tilde F\).

29.4.4. Nash equilibrium#

Clearly, the \(\tilde F\) we have obtained depends on \(K\), which, in agent 2’s problem, depended on an initial policy \(F\).

Holding all other parameters fixed, we can represent this relationship as a mapping \(\Phi\), where

The map \(F \mapsto \Phi (K(F, \theta))\) corresponds to a situation in which

agent 1 uses an arbitrary initial policy \(F\)

agent 2 best responds to agent 1 by choosing \(K(F, \theta)\)

agent 1 best responds to agent 2 by choosing \(\tilde F = \Phi (K(F, \theta))\)

As you may have already guessed, the robust policy \(\hat F\) defined in (29.7) is a fixed point of the mapping \(\Phi\).

In particular, for any given \(\theta\),

\(K(\hat F, \theta) = \hat K\), where \(\hat K\) is as given in (29.8)

\(\Phi(\hat K) = \hat F\)

A sketch of the proof is given in the appendix.

29.5. The stochastic case#

Now we turn to the stochastic case, where the sequence \(\{w_t\}\) is treated as an IID sequence of random vectors.

In this setting, we suppose that our agent is uncertain about the conditional probability distribution of \(w_{t+1}\).

The agent takes the standard normal distribution \(N(0, I)\) as the baseline conditional distribution, while admitting the possibility that other “nearby” distributions prevail.

These alternative conditional distributions of \(w_{t+1}\) might depend nonlinearly on the history \(x_s, s \leq t\).

To implement this idea, we need a notion of what it means for one distribution to be near another one.

Here we adopt a very useful measure of closeness for distributions known as the relative entropy, or Kullback-Leibler divergence.

For densities \(p, q\), the Kullback-Leibler divergence of \(q\) from \(p\) is defined as

Using this notation, we replace (29.3) with the stochastic analog

Here \(\mathcal P\) represents the set of all densities on \(\mathbb R^n\) and \(\phi\) is the benchmark distribution \(N(0, I)\).

The distribution \(\phi\) is chosen as the least desirable conditional distribution in terms of next period outcomes, while taking into account the penalty term \(\theta D_{KL}(\psi, \phi)\).

This penalty term plays a role analogous to the one played by the deterministic penalty \(\theta w'w\) in (29.3), since it discourages large deviations from the benchmark.

29.5.1. Solving the model#

The maximization problem in (29.22) appears highly nontrivial — after all, we are maximizing over an infinite dimensional space consisting of the entire set of densities.

However, it turns out that the solution is tractable, and in fact also falls within the class of normal distributions.

First, we note that \(J\) has the form \(J(x) = x' P x + d\) for some positive definite matrix \(P\) and constant real number \(d\).

Moreover, it turns out that if \((I - \theta^{-1} C' P C)^{-1}\) is nonsingular, then

where

and the maximizer is the Gaussian distribution

Substituting the expression for the maximum into Bellman equation (29.22) and using \(J(x) = x'Px + d\) gives

Since constant terms do not affect minimizers, the solution is the same as (29.6), leading to

To solve this Bellman equation, we take \(\hat P\) to be the positive definite fixed point of \(\mathcal B \circ \mathcal D\).

In addition, we take \(\hat d\) as the real number solving \(d = \beta \, [d + \kappa(\theta, P)]\), which is

The robust policy in this stochastic case is the minimizer in (29.25), which is once again \(u = - \hat F x\) for \(\hat F\) given by (29.7).

Substituting the robust policy into (29.24) we obtain the worst-case shock distribution:

where \(\hat K\) is given by (29.8).

Note that the mean of the worst-case shock distribution is equal to the same worst-case \(w_{t+1}\) as in the earlier deterministic setting.

29.5.2. Computing other quantities#

Before turning to implementation, we briefly outline how to compute several other quantities of interest.

29.5.2.1. Worst-case value of a policy#

One thing we will be interested in doing is holding a policy fixed and computing the discounted loss associated with that policy.

So let \(F\) be a given policy and let \(J_F(x)\) be the associated loss, which, by analogy with (29.22), satisfies

Writing \(J_F(x) = x'P_Fx + d_F\) and applying the same argument used to derive (29.23) we get

To solve this we take \(P_F\) to be the fixed point

and

If you skip ahead to the appendix, you will be able to verify that \(-P_F\) is the solution to the Bellman equation in agent 2’s problem discussed above — we use this in our computations.

29.6. Implementation#

The QuantEcon.py package provides a class called RBLQ for implementation of robust LQ optimal control.

The code can be found on GitHub.

Here is a brief description of the methods of the class

d_operator()andb_operator()implement \(\mathcal D\) and \(\mathcal B\) respectivelyrobust_rule()androbust_rule_simple()both solve for the triple \(\hat F, \hat K, \hat P\), as described in equations (29.7) – (29.8) and the surrounding discussionrobust_rule()is more efficientrobust_rule_simple()is more transparent and easier to follow

K_to_F()andF_to_K()solve the decision problems of agent 1 and agent 2 respectivelycompute_deterministic_entropy()computes the left-hand side of (29.13)evaluate_F()computes the loss and entropy associated with a given policy — see this discussion

29.7. Application#

Let us consider a monopolist similar to this one, but now facing model uncertainty.

The inverse demand function is \(p_t = a_0 - a_1 y_t + d_t\).

where

and all parameters are strictly positive.

The period return function for the monopolist is

Its objective is to maximize expected discounted profits, or, equivalently, to minimize \(\mathbb E \sum_{t=0}^\infty \beta^t (- r_t)\).

To form a linear regulator problem, we take the state and control to be

Setting \(b := (a_0 - c) / 2\) we define

For the transition matrices, we set

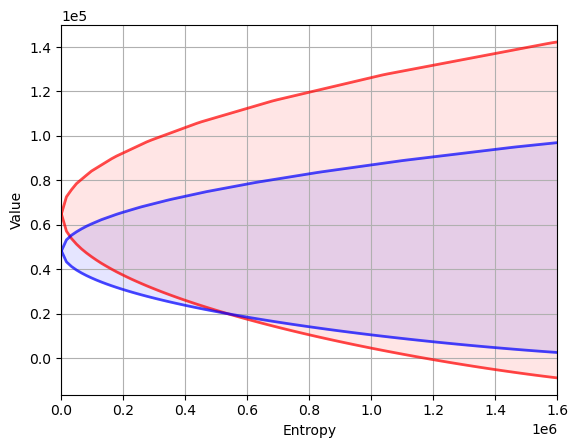

Our aim is to compute the value-entropy correspondences shown above.

The parameters are

The standard normal distribution for \(w_t\) is understood as the agent’s baseline, with uncertainty parameterized by \(\theta\).

We compute value-entropy correspondences for two policies

The no concern for robustness policy \(F_0\), which is the ordinary LQ loss minimizer.

A “moderate” concern for robustness policy \(F_b\), with \(\theta = 0.02\).

The code for producing the graph shown above, with blue being for the robust policy, is as follows

# Model parameters

a_0 = 100

a_1 = 0.5

ρ = 0.9

σ_d = 0.05

β = 0.95

c = 2

γ = 50.0

θ = 0.02

ac = (a_0 - c) / 2.0

# Define LQ matrices

R = np.array([[0., ac, 0.],

[ac, -a_1, 0.5],

[0., 0.5, 0.]])

R = -R # For minimization

Q = γ / 2

A = np.array([[1., 0., 0.],

[0., 1., 0.],

[0., 0., ρ]])

B = np.array([[0.],

[1.],

[0.]])

C = np.array([[0.],

[0.],

[σ_d]])

# ----------------------------------------------------------------------- #

# Functions

# ----------------------------------------------------------------------- #

def evaluate_policy(θ, F):

"""

Given θ (scalar, dtype=float) and policy F (array_like), returns the

value associated with that policy under the worst case path for {w_t},

as well as the entropy level.

"""

rlq = qe.RBLQ(Q, R, A, B, C, β, θ)

K_F, P_F, d_F, O_F, o_F = rlq.evaluate_F(F)

x0 = np.array([[1.], [0.], [0.]])

value = - x0.T @ P_F @ x0 - d_F

entropy = x0.T @ O_F @ x0 + o_F

return list(map(float, (value.item(), entropy.item())))

def value_and_entropy(emax, F, bw, grid_size=1000):

"""

Compute the value function and entropy levels for a θ path

increasing until it reaches the specified target entropy value.

Parameters

==========

emax: scalar

The target entropy value

F: array_like

The policy function to be evaluated

bw: str

A string specifying whether the implied shock path follows best

or worst assumptions. The only acceptable values are 'best' and

'worst'.

Returns

=======

df: pd.DataFrame

A pandas DataFrame containing the value function and entropy

values up to the emax parameter. The columns are 'value' and

'entropy'.

"""

if bw == 'worst':

θs = 1 / np.linspace(1e-8, 1000, grid_size)

else:

θs = -1 / np.linspace(1e-8, 1000, grid_size)

df = pd.DataFrame(index=θs, columns=('value', 'entropy'))

for θ in θs:

df.loc[θ] = evaluate_policy(θ, F)

if df.loc[θ, 'entropy'] >= emax:

break

df = df.dropna(how='any')

return df

# ------------------------------------------------------------------------ #

# Main

# ------------------------------------------------------------------------ #

# Compute the optimal rule

optimal_lq = qe.LQ(Q, R, A, B, C, beta=β)

Po, Fo, do = optimal_lq.stationary_values()

# Compute a robust rule given θ

baseline_robust = qe.RBLQ(Q, R, A, B, C, β, θ)

Fb, Kb, Pb = baseline_robust.robust_rule()

# Check the positive definiteness of worst-case covariance matrix to

# ensure that θ exceeds the breakdown point

test_matrix = np.identity(Pb.shape[0]) - (C.T @ Pb @ C) / θ

eigenvals, eigenvecs = eig(test_matrix)

assert (eigenvals >= 0).all(), 'θ below breakdown point.'

emax = 1.6e6

optimal_best_case = value_and_entropy(emax, Fo, 'best')

robust_best_case = value_and_entropy(emax, Fb, 'best')

optimal_worst_case = value_and_entropy(emax, Fo, 'worst')

robust_worst_case = value_and_entropy(emax, Fb, 'worst')

fig, ax = plt.subplots()

ax.set_xlim(0, emax)

ax.set_ylabel("Value")

ax.set_xlabel("Entropy")

ax.grid()

for axis in 'x', 'y':

plt.ticklabel_format(style='sci', axis=axis, scilimits=(0, 0))

plot_args = {'lw': 2, 'alpha': 0.7}

colors = 'r', 'b'

df_pairs = ((optimal_best_case, optimal_worst_case),

(robust_best_case, robust_worst_case))

class Curve:

def __init__(self, x, y):

self.x, self.y = x, y

def __call__(self, z):

return np.interp(z, self.x, self.y)

for c, df_pair in zip(colors, df_pairs):

curves = []

for df in df_pair:

# Plot curves

x, y = df['entropy'], df['value']

x, y = (np.asarray(a, dtype='float') for a in (x, y))

egrid = np.linspace(0, emax, 100)

curve = Curve(x, y)

print(ax.plot(egrid, curve(egrid), color=c, **plot_args))

curves.append(curve)

# Color fill between curves

ax.fill_between(egrid,

curves[0](egrid),

curves[1](egrid),

color=c, alpha=0.1)

plt.show()

[<matplotlib.lines.Line2D object at 0x7fdc7c41ac10>]

[<matplotlib.lines.Line2D object at 0x7fdc7c21efd0>]

[<matplotlib.lines.Line2D object at 0x7fdc7c21ee90>]

[<matplotlib.lines.Line2D object at 0x7fdc7c21f250>]

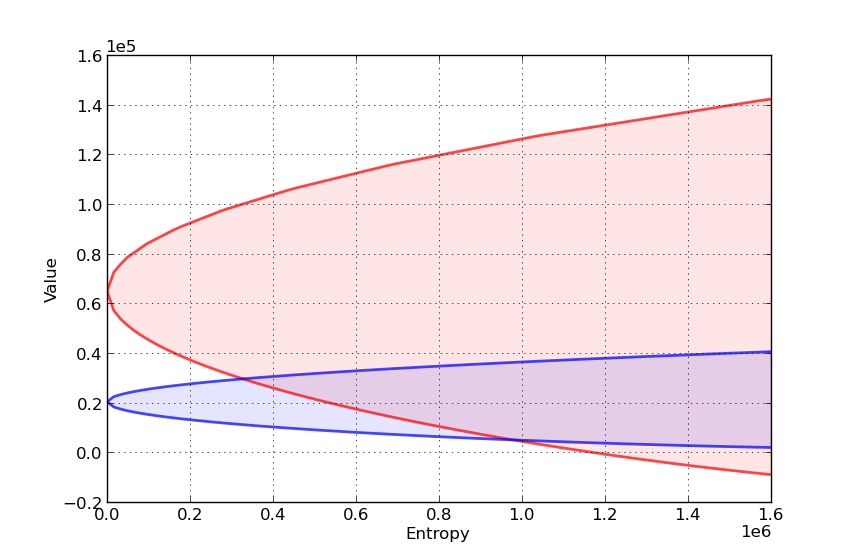

Here’s another such figure, with \(\theta = 0.002\) instead of \(0.02\)

Can you explain the different shape of the value-entropy correspondence for the robust policy?

29.8. Appendix#

We sketch the proof only of the first claim in this section, which is that, for any given \(\theta\), \(K(\hat F, \theta) = \hat K\), where \(\hat K\) is as given in (29.8).

This is the content of the next lemma.

Lemma. If \(\hat P\) is the fixed point of the map \(\mathcal B \circ \mathcal D\) and \(\hat F\) is the robust policy as given in (29.7), then

Proof: As a first step, observe that when \(F = \hat F\), the Bellman equation associated with the LQ problem (29.11) – (29.12) is

(revisit this discussion if you don’t know where (29.29) comes from) and the optimal policy is

Suppose for a moment that \(- \hat P\) solves the Bellman equation (29.29).

In this case, the policy becomes

which is exactly the claim in (29.28).

Hence it remains only to show that \(- \hat P\) solves (29.29), or, in other words,

Using the definition of \(\mathcal D\), we can rewrite the right-hand side more simply as

Although it involves a substantial amount of algebra, it can be shown that the latter is just \(\hat P\).

Hint

Use the fact that \(\hat P = \mathcal B( \mathcal D( \hat P))\)